Beyond Accuracy: Auditing Allocative Harms in Facial-Gesture Recognition for People with Motor Impairments

2026. Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems.

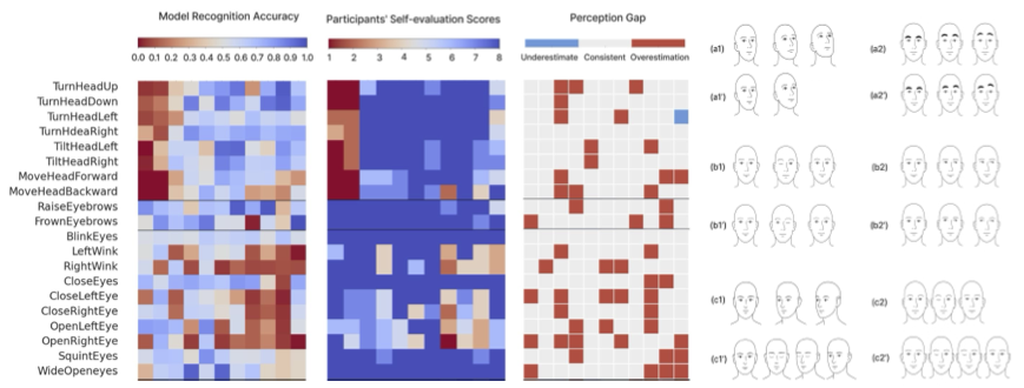

Camera-based facial-gesture interfaces offer hands-free access for people with motor impairments (PwM), yet most recognition models are trained on able-bodied data and implicitly assume normative motor control and proprioception. We conducted a mixed-methods empirical study of 37 above-neck gestures performed by 11 PwM and 11 non-impaired participants. Results reveal systematic mismatches between user intention and model recognition in the PwM group. These mismatches stem from diverse patterns of body perception and control, lead to allocative harms, and are concentrated in low-amplitude, asymmetric, and directional gestures. Building on these findings, we introduce FairGesture, a diagnostic auditing method for quantifying and interpreting such mismatches. FairGesture combines (1) the Perception Gap metric, (2) trajectory-based motion analysis, and (3) an analysis of users' sensorimotor feedback to explore the causes of these mismatches. The work reframes accuracy in gesture recognition as a problem of sensorimotor alignment, advancing user-centered evaluation and inclusive model design.

Full paper

https://dl.acm.org/doi/full/10.1145/3772318.3791927

Citation

Zhang, S., Gu, Y., Cater, K., & Metatla, O. (2026). Beyond accuracy: Auditing allocative harms in facial-gesture recognition for people with motor impairments. In Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems (pp. 1–24).

BibTeX

@inproceedings{zhang2026beyond, title={Beyond Accuracy: Auditing Allocative Harms in Facial-Gesture Recognition for People with Motor Impairments}, author={Zhang, Siyu and Gu, Yelu and Cater, Kirsten and Metatla, Oussama}, booktitle={Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems}, pages={1--24}, year={2026} }